The Rise of Edge AI: Unleashing Intelligence at the Device Frontier

Introduction: Where AI Meets the Real World – At the Edge

Imagine a world where your devices don’t just collect data, but instantly understand and act upon it, all without needing to send a single byte to the distant cloud. This isn’t science fiction; it’s the rapidly unfolding reality of Edge AI. As digital landscapes become increasingly saturated with smart devices, sensors, and interconnected systems, the demand for immediate, intelligent responses has never been greater. Traditional cloud-based AI, while powerful, often grapples with latency, bandwidth limitations, and privacy concerns when every millisecond counts. This is where AI at the edge steps in, revolutionizing how we interact with technology and paving the way for truly autonomous and responsive systems.

Edge AI represents a paradigm shift, moving artificial intelligence processing from centralized data centers closer to the source of data generation – the “edge” of the network. This on-device AI powers everything from smart cameras performing real-time object detection to industrial robots predicting maintenance needs, all without constant internet connectivity. In this guide, we’ll cover the core concepts of Edge AI, its main benefits, its applications across industries, and the challenges that developers and businesses are actively addressing. We’ll also compare Edge AI with cloud AI, look at the enabling technology, and consider where the field is heading and why edge machine learning is becoming a cornerstone of the next generation of smart devices.

What is Edge AI? Defining Intelligence Beyond the Cloud

At its heart, Edge AI is the deployment of AI algorithms and models directly on local devices rather than relying solely on remote cloud servers for processing. Think of it as giving your devices a brain of their own, enabling them to make intelligent decisions locally and in real-time. This concept is closely intertwined with Edge computing, a distributed computing framework that brings computation and data storage closer to the data sources, reducing latency and bandwidth usage.

The fundamental idea is simple: instead of sending raw data (like video footage from a security camera) to a distant cloud server for analysis, the camera itself, or a nearby Edge AI processor, performs the analysis. This could involve recognizing faces, detecting anomalies, or counting objects, all within milliseconds of the event occurring. This localized processing is crucial for low-latency AI applications where immediate action is paramount.

The Core Principles of AI at the Edge:

- Proximity to Data Source: AI processing occurs geographically closer to where data is generated, minimizing travel time for data.

- Reduced Latency: Eliminates the round trip to the cloud, enabling near real-time decision-making and action.

- Lower Bandwidth Consumption: Only processed insights or relevant events are sent to the cloud, drastically cutting down on data transmission.

- Enhanced Privacy and Security: Sensitive data can be processed and analyzed locally, reducing the risk of exposure during transit to the cloud. This fosters privacy-preserving AI.

- Offline Capability: Edge devices can continue to operate and make intelligent decisions even when internet connectivity is intermittent or unavailable.

The emergence of powerful yet energy-efficient Edge AI processors and advancements in tinyML (Tiny Machine Learning) are key enablers for this revolution. These specialized hardware components and optimized software frameworks allow complex AI models to run effectively on resource-constrained smart devices.

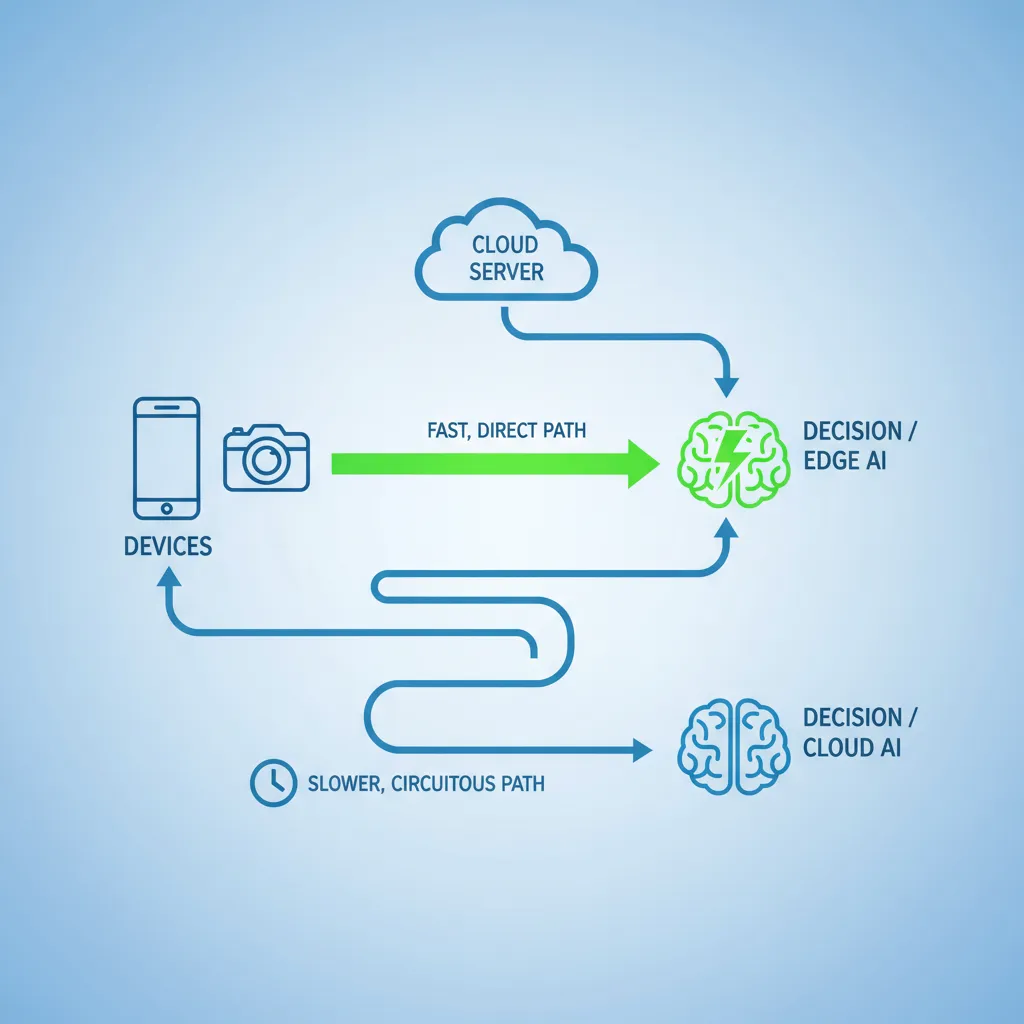

Edge AI vs. Cloud AI: A Tale of Two Architectures

Understanding the distinction between Edge AI and Cloud AI is crucial for appreciating the unique value proposition of intelligence at the device frontier. While they both leverage artificial intelligence, their architectural approaches and strengths differ significantly.

Cloud AI: The Centralized Powerhouse

Cloud AI relies on vast, centralized data centers with immense computational power.

- Strengths: Unparalleled processing capabilities for large-scale data, complex model training, and historical data analysis. Compute and storage can be scaled up on demand.

- Weaknesses: High latency due to data transmission over networks, significant bandwidth requirements, potential privacy concerns when data leaves local control, and reliance on constant internet connectivity.

Edge AI: The Decentralized Dynamo

Edge AI distributes processing closer to the data source.

- Strengths: Minimal latency, reduced bandwidth usage, enhanced data privacy and security, and reliable operation even without internet access. It’s ideal for real-time AI applications.

- Weaknesses: Limited computational resources compared to the cloud, often requiring optimized or smaller AI models, and challenges in model updates and management across a vast fleet of devices.

The reality, however, is not an either/or scenario. Many modern AI systems adopt a hybrid approach, leveraging the strengths of both. Edge AI solutions can preprocess data locally, sending only essential insights or filtered data to the cloud for further, deeper analysis or long-term storage. This collaborative model optimizes performance, cost, and security.
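The hybrid pattern described above can be sketched in a few lines. This is a minimal illustration, not a reference design: the `Summary` type, the `summarize_on_edge` function, and the threshold value are all hypothetical names and numbers chosen for the example. The device reduces a window of raw readings to a compact summary, and only that summary would be sent on to the cloud.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Summary:
    count: int          # number of raw readings seen on the device
    mean_value: float   # average of those readings
    alerts: list        # readings that crossed the alert threshold


def summarize_on_edge(readings, alert_threshold):
    """Reduce a non-empty window of raw readings to a compact summary."""
    alerts = [r for r in readings if r > alert_threshold]
    return Summary(count=len(readings), mean_value=mean(readings), alerts=alerts)


# Hypothetical temperature window captured on the device.
window = [20.1, 20.3, 19.8, 35.2, 20.0]
summary = summarize_on_edge(window, alert_threshold=30.0)

# Only `summary` would leave the device; the raw window stays local.
print(summary)
```

The raw readings never leave the device in this sketch; the cloud side would receive the count, the mean, and the single out-of-range reading.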

The Compelling Edge AI Benefits: Why It Matters

The shift towards AI at the edge isn’t merely a technological curiosity; it’s driven by a host of tangible advantages that are reshaping industries and user experiences.

1. Ultra-Low Latency and Real-time Processing

Perhaps the most significant benefit of Edge AI is its ability to deliver low-latency AI. Processing data locally removes the network round trip to a cloud server and back, so the response time depends mainly on how fast the device itself can run the model. This is critical for applications where milliseconds matter, such as autonomous vehicles needing to react instantly to road conditions, or industrial robots requiring immediate feedback for precision tasks.
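To make the latency argument concrete, here is a small back-of-the-envelope calculation. All timing figures are illustrative assumptions, not measurements: real network and inference times vary widely with hardware, model, and connectivity. The function names are hypothetical.

```python
def cloud_latency_ms(uplink_ms, cloud_inference_ms, downlink_ms):
    """Total delay when the data goes to the cloud and the answer comes back."""
    return uplink_ms + cloud_inference_ms + downlink_ms


def edge_latency_ms(edge_inference_ms):
    """Total delay when the model runs on the device itself."""
    return edge_inference_ms


def distance_travelled_m(speed_kmh, delay_ms):
    """Distance covered at a constant speed during a given delay."""
    return speed_kmh / 3.6 * (delay_ms / 1000)


# Assumed figures: 40 ms each way on the network, a fast cloud model,
# and a slower but local edge model.
cloud = cloud_latency_ms(uplink_ms=40, cloud_inference_ms=10, downlink_ms=40)
edge = edge_latency_ms(edge_inference_ms=25)

for label, delay in (("cloud", cloud), ("edge", edge)):
    metres = distance_travelled_m(speed_kmh=100, delay_ms=delay)
    print(f"{label}: {delay} ms, vehicle at 100 km/h travels {metres:.2f} m")
```

Under these assumed numbers the edge path is faster even though the edge model itself is slower than the cloud model, because the network round trip dominates the cloud total.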

2. Enhanced Data Privacy and Security

Sending sensitive data over public networks to the cloud raises significant privacy and security concerns. Edge AI addresses this by enabling privacy-preserving AI. Data can be processed and analyzed on the device itself, and only anonymized insights or aggregated results are shared, if at all. This minimizes the risk of data breaches and helps comply with stringent data protection regulations like GDPR. For example, a smart camera can detect suspicious activity without ever sending raw video footage of individuals to a remote server.

3. Reduced Bandwidth and Network Costs

As the number of connected devices explodes, the sheer volume of data generated can overwhelm network infrastructure and incur substantial bandwidth costs. Edge AI significantly reduces this burden. Instead of continuously streaming raw data, only critical events, alerts, or summarized information needs to be transmitted to the cloud. This makes Edge AI in IoT particularly impactful, as IoT deployments often involve thousands or millions of sensors generating constant data streams.
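The bandwidth saving can be estimated with simple arithmetic. The sketch below compares a continuously streamed camera feed with event-only reporting; the bitrate, event count, and payload size are assumed values picked for illustration, and the function names are hypothetical.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def stream_bytes_per_day(bitrate_bits_per_s):
    """Bytes sent per day by a continuous stream at a fixed bitrate."""
    return bitrate_bits_per_s * SECONDS_PER_DAY // 8


def event_bytes_per_day(events_per_day, bytes_per_event):
    """Bytes sent per day when only discrete events are reported."""
    return events_per_day * bytes_per_event


# Assumptions: a 2 Mbit/s video stream versus 200 small event messages per day.
raw = stream_bytes_per_day(2_000_000)
events = event_bytes_per_day(events_per_day=200, bytes_per_event=500)

print(f"raw stream:  {raw / 1e9:.1f} GB/day")
print(f"events only: {events / 1e3:.1f} kB/day")
print(f"reduction:   {raw / events:,.0f}x")
```

With these inputs a single camera drops from roughly 21.6 GB per day to about 100 kB per day. The ratio scales with the number of devices, which is why the effect matters most in large IoT deployments.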

4. Offline Operation and Increased Reliability

Cloud connectivity isn’t always guaranteed, especially in remote locations, during natural disasters, or in scenarios where network infrastructure is unreliable. On-device AI ensures that intelligent systems can continue to function and make decisions even when disconnected from the internet. This resilience is vital for critical infrastructure, remote monitoring, and safety-critical applications.
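One common way to tolerate lost connectivity is a store-and-forward buffer: the device keeps making decisions locally and queues outgoing events until the link returns. The following is a minimal sketch under stated assumptions. `StoreAndForward` and `FakeLink` are hypothetical classes written for this example, and the `send` callable is assumed to return `True` on success.

```python
from collections import deque


class StoreAndForward:
    """Buffer outgoing events locally and deliver them in order when possible."""

    def __init__(self, send, max_buffered=1000):
        self._send = send                          # callable: event -> bool
        self._buffer = deque(maxlen=max_buffered)  # oldest events drop when full

    def publish(self, event):
        self._buffer.append(event)
        self.flush()

    def flush(self):
        """Try to deliver buffered events; stop at the first failure."""
        while self._buffer:
            if not self._send(self._buffer[0]):
                return False
            self._buffer.popleft()
        return True

    def pending(self):
        return len(self._buffer)


class FakeLink:
    """Stand-in for a network connection that can be switched on and off."""

    def __init__(self):
        self.up = False
        self.delivered = []

    def send(self, event):
        if self.up:
            self.delivered.append(event)
        return self.up


link = FakeLink()
queue = StoreAndForward(link.send)
queue.publish("door_open")     # link is down: event is buffered
queue.publish("door_closed")   # still buffered
link.up = True
queue.flush()                  # link restored: both events go out in order
print(link.delivered, queue.pending())
```

The bounded `deque` is a deliberate choice for constrained devices: memory use stays fixed, at the cost of dropping the oldest events if an outage lasts too long.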

5. Energy Efficiency and Sustainability

While training large AI models in the cloud consumes significant energy, running inference on optimized Edge AI processors can be remarkably energy-efficient. This is particularly relevant for battery-powered smart devices and large-scale IoT deployments, contributing to more sustainable technology solutions.

6. Scalability and Decentralized AI

Deploying AI models at the edge allows for a more scalable and decentralized AI architecture. Instead of funneling all data through a central point, intelligence is distributed across many devices, reducing bottlenecks and improving overall system resilience. Processing capacity grows with each device added, although managing a large fleet brings operational challenges of its own.

Diverse Edge AI Applications and Use Cases

The versatility of Edge AI means its applications span a vast array of industries, transforming operations and creating new possibilities. Here are some compelling Edge AI use cases:

1. Smart Cities and Infrastructure

- Traffic Management: Cameras with on-device AI can monitor traffic flow, detect accidents, and optimize signal timing in real time to reduce congestion.

- Public Safety: Smart surveillance systems can identify unusual behavior or unauthorized access without sending continuous video streams to a central server, which helps preserve privacy.

- Waste Management: Sensors on bins use Edge machine learning to determine fill levels, optimizing collection routes and reducing operational costs.

2. Manufacturing and Industry 4.0

Edge AI in manufacturing is a game-changer for efficiency and predictive maintenance.

- Predictive Maintenance: Sensors on machinery analyze vibrations and temperature fluctuations using Edge AI platforms to predict equipment failure before it occurs, minimizing downtime and maintenance costs.

- Quality Control: AI-powered cameras inspect products on assembly lines in real-time, detecting defects with high accuracy and speed.

- Worker Safety: Wearable devices with Edge AI can monitor worker vitals and detect hazardous conditions, providing immediate alerts.

- Robotics: Industrial robots leverage low-latency AI for enhanced navigation, object manipulation, and collaborative tasks, improving factory automation.
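As an illustration of the predictive maintenance item above, the sketch below flags vibration readings that deviate sharply from the recent baseline using a rolling z-score. This is one simple statistical technique, not necessarily what a production system would use; the `VibrationMonitor` class, the window size, and the threshold are assumptions made for the example.

```python
from collections import deque
from statistics import mean, pstdev


class VibrationMonitor:
    """Flag readings that sit far outside the recent rolling baseline."""

    def __init__(self, window=20, z_threshold=3.0, min_samples=5):
        self._history = deque(maxlen=window)
        self._z_threshold = z_threshold
        self._min_samples = min_samples

    def update(self, value):
        """Return True if `value` is anomalous relative to recent history."""
        anomalous = False
        if len(self._history) >= self._min_samples:
            mu = mean(self._history)
            sigma = pstdev(self._history)
            if sigma > 0 and abs(value - mu) / sigma > self._z_threshold:
                anomalous = True
        if not anomalous:
            # Keep outliers out of the baseline so they do not mask later ones.
            self._history.append(value)
        return anomalous


# Hypothetical vibration amplitudes with one spike.
readings = [1.0, 1.1, 0.9, 1.0, 1.05, 0.95, 1.0, 4.0, 1.0]
monitor = VibrationMonitor()
flags = [monitor.update(r) for r in readings]
print(flags)
```

Everything here runs in constant memory and needs only basic arithmetic, which is the kind of footprint that suits a sensor-side deployment.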

3. Healthcare and Medical Innovation

Edge AI in healthcare is revolutionizing patient care and diagnostics.

- Remote Patient Monitoring: Wearable devices and home sensors use on-device AI to monitor vital signs, detect anomalies, and alert caregivers to potential issues, while keeping raw health data on the device.

- Assisted Diagnostics: Portable medical imaging devices can perform initial analyses using Edge machine learning, helping clinicians in remote areas make faster diagnoses.

- Elderly Care: Smart home sensors with Edge AI can detect falls or unusual patterns in daily routines, providing peace of mind for families.

- Smart Hospitals: Edge devices can manage inventory, track assets, and optimize patient flow within a hospital environment.

4. Autonomous Vehicles and Transportation

The future of autonomous driving heavily relies on Edge AI solutions.

- Real-time Decision Making: Autonomous vehicles process vast amounts of sensor data (lidar, radar, cameras) locally to navigate, detect obstacles, and react to changing road conditions within milliseconds.

- Driver Monitoring: In-cabin cameras use on-device AI to monitor driver attention and detect drowsiness or distraction, enhancing safety.

5. Retail and Smart Stores

- Inventory Management: Edge AI can track product movement, monitor stock levels, and identify popular items, optimizing store operations.

- Personalized Customer Experiences: Digital signage can adapt content based on real-time crowd analysis, delivering targeted promotions.

- Loss Prevention: Smart cameras can detect shoplifting attempts or unusual behavior without constant human oversight.

6. Agriculture (AgriTech)

- Precision Farming: Drones and ground sensors use Edge AI to analyze crop health, soil conditions, and pest infestations, allowing for targeted intervention and resource optimization.

- Livestock Monitoring: Wearable sensors on animals can monitor health, behavior, and location, providing insights to farmers.

Navigating the Edge AI Challenges

While the Edge AI advantages are numerous, deploying and managing intelligence at the device frontier is not without its hurdles. Understanding these Edge AI challenges is vital for successful implementation.

1. Resource Constraints

Edge devices, especially those for tinyML applications, often have limited computational power, memory, and energy budgets. Developing and deploying efficient AI models that can run effectively within these constraints is a significant challenge. This requires model optimization techniques such as quantization, along with efficient Edge AI processors.
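Quantization is mentioned above without detail, so here is a minimal sketch of one variant, symmetric 8-bit quantization, in plain Python. It is a simplified illustration rather than what any particular framework does: real toolchains typically quantize per tensor or per channel and calibrate activations as well. The function names are hypothetical. Storing each weight as an 8-bit integer instead of a 32-bit float cuts weight storage to a quarter.

```python
def quantize_symmetric_int8(weights):
    """Map floats to integers in [-127, 127] using a single shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the integers."""
    return [q * scale for q in quantized]


# Hypothetical weights for illustration.
weights = [0.5, -1.27, 0.003, 1.0]
q, scale = quantize_symmetric_int8(weights)
restored = dequantize(q, scale)

print(q)
for original, approx in zip(weights, restored):
    print(f"{original:+.4f} -> {approx:+.4f}")
```

Note how the very small weight rounds to zero: the rounding error per weight is bounded by half the scale, so values much smaller than the largest weight lose the most relative precision.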

2. Model Development and Optimization

Training complex AI models typically requires massive datasets and powerful cloud infrastructure. Adapting these models to run efficiently on resource-constrained edge devices demands expertise in model compression, pruning, and efficient inference techniques. The lifecycle of Edge machine learning models, from training to deployment and continuous updates, needs robust management.
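Pruning, one of the compression techniques named above, can be illustrated with magnitude pruning: the weights with the smallest absolute values are set to zero on the assumption that they contribute least. The sketch below is a toy version operating on a flat list; the `prune_by_magnitude` function is a hypothetical helper, and real workflows usually prune gradually and fine-tune the model afterwards to recover accuracy.

```python
def prune_by_magnitude(weights, sparsity):
    """Zero out the given fraction of weights with the smallest magnitudes."""
    if not 0.0 <= sparsity < 1.0:
        raise ValueError("sparsity must be in [0, 1)")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    to_zero = set(order[:n_prune])
    return [0.0 if i in to_zero else w for i, w in enumerate(weights)]


# Hypothetical weights for illustration.
weights = [0.8, -0.05, 0.3, 0.01, -0.6, 0.02]
pruned = prune_by_magnitude(weights, sparsity=0.5)
print(pruned)
```

The zeros only save memory or compute if the storage format or the hardware can exploit sparsity, which is one reason pruning is often combined with other techniques.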

3. Connectivity and Interoperability

While Edge AI reduces reliance on constant cloud connectivity, managing communication between edge devices, local gateways, and the cloud can be complex. Ensuring interoperability between diverse hardware, software, and communication protocols across a heterogeneous ecosystem is crucial for scalable Edge AI solutions.

4. Security and Data Integrity

While Edge AI enhances privacy by keeping data local, it also introduces new security vulnerabilities at the device level. Protecting the integrity of on-device AI models from tampering, securing data at rest and in transit, and managing device authentication are paramount. This is a critical aspect of Edge AI security.
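One way to detect tampering with an on-device model is to verify an authentication tag before loading it. The sketch below uses an HMAC from the Python standard library purely for illustration; the key, the model bytes, and the function names are placeholders. In practice an asymmetric signature is often preferable, since the device then needs to hold only a public key, but that requires a third-party library and is not shown here.

```python
import hashlib
import hmac


def sign_model(model_bytes, key):
    """Compute an HMAC-SHA256 tag over the serialized model."""
    return hmac.new(key, model_bytes, hashlib.sha256).hexdigest()


def verify_model(model_bytes, key, expected_tag):
    """Return True only if the model matches the tag it shipped with."""
    actual_tag = sign_model(model_bytes, key)
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(actual_tag, expected_tag)


# Placeholder key and model contents for the example only.
key = b"demo-key-not-for-production"
model = b"\x00\x01fake-model-weights"
tag = sign_model(model, key)

print(verify_model(model, key, tag))              # untouched model
print(verify_model(model + b"\xff", key, tag))    # modified model
```

A device would refuse to load any model for which the check fails, and fall back to the last known-good version.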

5. Deployment and Management at Scale

Managing a large fleet of geographically dispersed edge devices, each running potentially different AI models, presents significant operational challenges. This includes remote deployment of updates, monitoring device health, troubleshooting issues, and ensuring consistent performance. Effective Edge AI development and robust Edge AI platforms are essential for addressing this at scale.
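Remote updates across a fleet are often rolled out in stages so that a bad model reaches only a small share of devices first. A minimal sketch of that idea follows, assuming each device has a stable string ID; the function names and the ID format are hypothetical. Hashing the ID gives every device a fixed bucket, so raising the rollout percentage only ever adds devices and never removes ones that have already updated.

```python
import hashlib


def rollout_bucket(device_id):
    """Map a device ID to a stable bucket in the range 0..99."""
    digest = hashlib.sha256(device_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % 100


def should_update(device_id, rollout_percent):
    """Decide whether this device is included at the current rollout stage."""
    return rollout_bucket(device_id) < rollout_percent


# Hypothetical fleet of 1000 devices.
fleet = [f"device-{n:04d}" for n in range(1000)]
canary = [d for d in fleet if should_update(d, 10)]
half = [d for d in fleet if should_update(d, 50)]

print(f"10% stage: {len(canary)} devices")
print(f"50% stage: {len(half)} devices")
```

Because the decision is a pure function of the device ID and the percentage, each device can evaluate it locally without the server tracking per-device state.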

6. Data Governance and Compliance

Even with local processing, data generated at the edge may still be subject to various regulatory compliance requirements. Establishing clear data governance policies for what data is processed locally, what is aggregated, and what is sent to the cloud is crucial, especially for privacy-preserving AI.
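A governance policy of the kind described above can be expressed as an explicit table that the device consults before any data leaves it. The sketch below is only an illustration of the idea: the data classes, the `Action` names, and the default are invented for the example, and an actual policy would come from the organization's legal and compliance requirements.

```python
from enum import Enum


class Action(Enum):
    KEEP_LOCAL = "keep_local"   # never leaves the device
    AGGREGATE = "aggregate"     # only summaries leave the device
    UPLOAD = "upload"           # may be sent to the cloud as-is


# Hypothetical policy table mapping data classes to handling rules.
POLICY = {
    "raw_video": Action.KEEP_LOCAL,
    "people_count": Action.AGGREGATE,
    "device_health": Action.UPLOAD,
}


def action_for(data_class):
    """Look up the handling rule; unknown data defaults to staying local."""
    return POLICY.get(data_class, Action.KEEP_LOCAL)


for name in ("raw_video", "people_count", "device_health", "unlisted_sensor"):
    print(f"{name}: {action_for(name).value}")
```

Defaulting unknown data classes to the most restrictive action means a newly added sensor cannot start uploading data before someone has classified it.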

Enabling Technologies: The Pillars of Edge AI

The rapid growth of Edge AI technology is underpinned by several key advancements:

1. Edge AI Processors and Hardware Accelerators

Dedicated Edge AI processors (like NPUs, TPUs, GPUs optimized for edge, and specialized ASICs) are designed for efficient AI inference with low power consumption. These chips are capable of handling the computational demands of neural networks directly on the device, often supporting tinyML frameworks.

2. Tiny Machine Learning (tinyML)

TinyML is a subfield of machine learning focused on bringing AI capabilities to extremely resource-constrained devices, often operating on milliwatts of power. It involves highly optimized models and specialized software to enable efficient Edge machine learning on microcontrollers and other embedded systems.
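A quick way to see why tinyML depends on heavily optimized models is to check whether the weights alone fit in a microcontroller's flash. The numbers below are assumptions chosen for illustration (a 250,000-parameter model and 512 KiB of flash with some space reserved for firmware), and the helper names are hypothetical. The estimate ignores activations and runtime overhead, which also need memory.

```python
def model_size_bytes(num_parameters, bits_per_weight):
    """Storage needed for the weights alone."""
    return num_parameters * bits_per_weight // 8


def fits_in_flash(num_parameters, bits_per_weight, flash_bytes, reserved_bytes=0):
    """Check whether the weights fit in flash after reserving firmware space."""
    available = flash_bytes - reserved_bytes
    return model_size_bytes(num_parameters, bits_per_weight) <= available


# Assumed figures for the example.
params = 250_000
flash = 512 * 1024          # 512 KiB of flash
firmware = 128 * 1024       # space assumed to be taken by application code

for bits in (32, 8):
    size = model_size_bytes(params, bits)
    ok = fits_in_flash(params, bits, flash, reserved_bytes=firmware)
    print(f"{bits}-bit weights: {size:,} bytes, fits: {ok}")
```

With these assumptions the 32-bit model needs about 1 MB and does not fit, while the 8-bit version needs 250,000 bytes and does.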

3. 5G and Advanced Connectivity

The advent of 5G networks, with their ultra-low latency and high bandwidth, complements Edge AI perfectly. While Edge AI reduces the need to send all data to the cloud, 5G can facilitate faster and more reliable communication between edge devices and local edge servers, enhancing distributed intelligence.

4. Machine Learning Operations (MLOps) for Edge

Effective MLOps strategies are crucial for managing the entire lifecycle of Edge AI solutions, from model development and deployment to monitoring, updates, and retraining across a distributed fleet of devices. This ensures the ongoing performance and reliability of on-device AI.

5. Edge AI Platforms and Frameworks

A growing ecosystem of Edge AI platforms and frameworks simplifies the development, deployment, and management of AI at the edge. Examples include TensorFlow Lite and OpenVINO for on-device inference, NVIDIA Jetson hardware modules, and AWS IoT Greengrass for deploying and managing software on devices. These platforms provide tools for model optimization, device integration, and remote management.

The Future of Edge AI: A Glimpse Ahead

The trajectory of Edge AI suggests a future where intelligence is pervasive, embedded into the fabric of our physical world. The future of Edge AI is not just about faster processing; it’s about creating a more responsive, secure, and autonomous environment.

We can anticipate:

- Hyper-Personalization: Devices will understand individual user preferences and contexts with unprecedented accuracy, providing truly personalized experiences directly on the device.

- Increased Autonomy: More devices, from robots to everyday appliances, will operate with greater independence, making complex decisions without human intervention or constant cloud oversight. This will accelerate the adoption of decentralized AI.

- Sophisticated Predictive Capabilities: Enhanced Edge machine learning will enable devices to not just react, but proactively anticipate needs and prevent issues across various domains, from smart homes to industrial infrastructure.

- Democratization of AI: As Edge AI development becomes more accessible through user-friendly tools and platforms, more developers will be able to build innovative Edge AI solutions, fostering a new wave of creativity.

- Convergence with Other Technologies: Edge AI will increasingly converge with technologies like augmented reality (AR), virtual reality (VR), and digital twins, creating immersive and highly intelligent environments. For instance, AR headsets using on-device AI could provide real-time contextual information overlaid on the physical world.

The role of Edge AI companies will expand, focusing on specialized hardware, robust software platforms, and end-to-end Edge AI solutions that cater to specific industry needs. The continuous evolution of Edge AI technology promises to unlock new frontiers of innovation, making our digital and physical worlds smarter, safer, and more efficient.

Conclusion: The Intelligent Frontier is Here

The rise of Edge AI marks a pivotal moment in the evolution of artificial intelligence. By bringing processing power and intelligence closer to the source of data, we are addressing critical limitations of traditional cloud-centric models – latency, bandwidth, and privacy. From enhancing operational efficiency in factories to enabling life-saving diagnostics in healthcare and powering the next generation of autonomous systems, the Edge AI advantages are undeniable and transformative.

While Edge AI challenges related to resource constraints, security, and large-scale management still exist, ongoing innovations in Edge AI processors, tinyML, and sophisticated Edge AI platforms are rapidly overcoming these hurdles. The future is bright for AI at the edge, promising a world where devices are not just connected, but truly intelligent, making decisions in real-time, respecting our privacy, and ultimately, making our lives better. Embracing Edge AI development today is not just an option; it’s a strategic imperative for any organization looking to thrive in an increasingly intelligent and interconnected world.


FAQs About Edge AI

Q1. What is the main difference between Edge AI and Cloud AI?

The main difference lies in where the AI processing occurs. Cloud AI processes data in centralized data centers, whereas Edge AI processes data directly on local devices, closer to the source of data generation. This leads to differences in latency, bandwidth usage, and data privacy.

Q2. Why is Edge AI important for IoT devices?

Edge AI in IoT is crucial because it enables smart devices to process data locally, reducing the reliance on constant cloud connectivity, minimizing latency for real-time applications, and significantly cutting down on bandwidth consumption from transmitting raw sensor data. This makes IoT deployments more efficient, secure, and reliable.

Q3. What are the primary benefits of using Edge AI?

The primary Edge AI benefits include ultra-low latency processing, enhanced data privacy and security (enabling privacy-preserving AI), reduced bandwidth and network costs, reliable offline operation, and improved scalability for decentralized AI systems.

Q4. Are there any disadvantages or challenges with Edge AI?

Yes, Edge AI challenges include managing resource constraints on devices (e.g., limited processing power, memory, battery), optimizing complex AI models for efficient on-device inference, ensuring robust Edge AI security, and effectively deploying and managing a large fleet of geographically dispersed edge devices.

Q5. What kind of applications are best suited for Edge AI?

Edge AI is best suited for scenarios requiring low-latency, real-time decision-making, such as autonomous vehicles, industrial automation, smart surveillance, remote patient monitoring in healthcare, and predictive maintenance in manufacturing. Any application benefiting from immediate local processing and enhanced data privacy is a strong candidate.

Q6. What is tinyML and how does it relate to Edge AI?

TinyML (Tiny Machine Learning) is a specialized field within Edge AI that focuses on enabling machine learning capabilities on extremely resource-constrained devices, often microcontrollers, with very low power consumption. It’s a key enabler for bringing on-device AI to a vast array of small, battery-powered smart devices.

Q7. How does Edge AI improve data security and privacy?

Edge AI improves security and privacy because data can be processed and analyzed locally on the device itself. This minimizes the need to transmit sensitive raw data to the cloud, reducing exposure to potential breaches during transit and allowing for privacy-preserving AI where only aggregated or anonymized insights are shared.

Q8. What is the future outlook for Edge AI?

The future of Edge AI looks very promising, with expectations of increased autonomy in devices, deeper personalization, sophisticated predictive capabilities, and a further democratization of AI development. It will increasingly converge with other emerging technologies like 5G, AR, and digital twins to create more intelligent and responsive environments.