Llama 3: Meta’s New Open Source AI Explained

Introduction: The New Standard in Open-Source AI

The field of artificial intelligence is defined by breakneck innovation. Every few months, a new breakthrough shifts the landscape, but few releases carry the weight and potential impact of Meta’s latest offering: Llama 3.

Since its introduction, the Meta Llama 3 large language model (LLM) has not just captured headlines; it has fundamentally redefined what is possible in the open-source AI community. This isn’t just an incremental update to its predecessor, Llama 2—it’s a dramatic leap forward designed to compete directly with leading proprietary models, setting a new benchmark for performance, safety, and accessibility.

If you are a developer looking for the best open source LLM, a researcher tracking AI trends 2024, or simply curious about the engine powering the Meta AI assistant, understanding Llama 3 is crucial. This comprehensive guide will explain what is Llama 3, detail its innovative architecture, analyze the critical Llama 3 benchmarks, and provide practical steps on how to use Llama 3 today.

We will explore how this next generation AI model is influencing the landscape of AI development, examining its strengths, weaknesses, and what its open-source nature truly means for the future of machine learning models.

The Birth of a Titan: What is Meta Llama 3?

Llama 3 is Meta’s most capable and advanced family of AI models to date, released with an aggressive open-source philosophy. The Llama 3 release included two primary versions: a compact 8-billion parameter model (Llama 3 8B) and a powerful 70-billion parameter model (Llama 3 70B). These models are optimized for a wide range of tasks, from rapid code generation to sophisticated conversational AI.

The defining characteristic of Llama 3, much like its predecessors, is its commitment to open-source AI. While many powerful LLMs remain closed behind proprietary APIs, Meta releases the weights and training methods for Llama 3 to the public. This empowers researchers, startups, and developers globally to build upon, customize, and deploy the technology without stringent licensing barriers—a massive advantage for accelerating innovation.

This move reinforces Meta’s commitment to driving the open ecosystem, ensuring that cutting-edge natural language processing tools are democratized and not siloed by a few major tech companies.

Key Innovations Driving Llama 3’s Performance

The superior performance of Meta’s new AI model isn’t just magic; it’s the result of significant upgrades in training, data quality, and architecture.

1. Unprecedented Training Data Volume

Llama 3 was trained on a massive dataset of over 15 trillion tokens. To put that into perspective, that is roughly seven times the size of the dataset used for Llama 2. Crucially, Meta focused heavily on filtering and data quality, ensuring the training set was diverse, high-quality, and included a large portion of code data, which significantly boosted the model’s performance in logical reasoning and coding tasks.

2. Enhanced Tokenizer and Context

One of the subtle yet impactful changes is the adoption of a much larger vocabulary size: 128,000 tokens, up from 32,000 in Llama 2. A larger tokenizer improves the efficiency with which the model processes text, especially across multiple languages. This enhancement directly contributes to better performance, lower latency, and greater accuracy in complex tasks.

3. Instruction Fine-Tuning and Alignment

Llama 3 utilizes a refined methodology for instruction fine-tuning, involving a combination of supervised fine-tuning (SFT), rejection sampling, and Proximal Policy Optimization (PPO). This rigorous alignment process ensures the models are safer, more helpful, and better at following complex, multi-step instructions, making them highly effective for applications like the Meta AI assistant.

[Related: Mastering Generative AI: Next-Gen Content Creation]

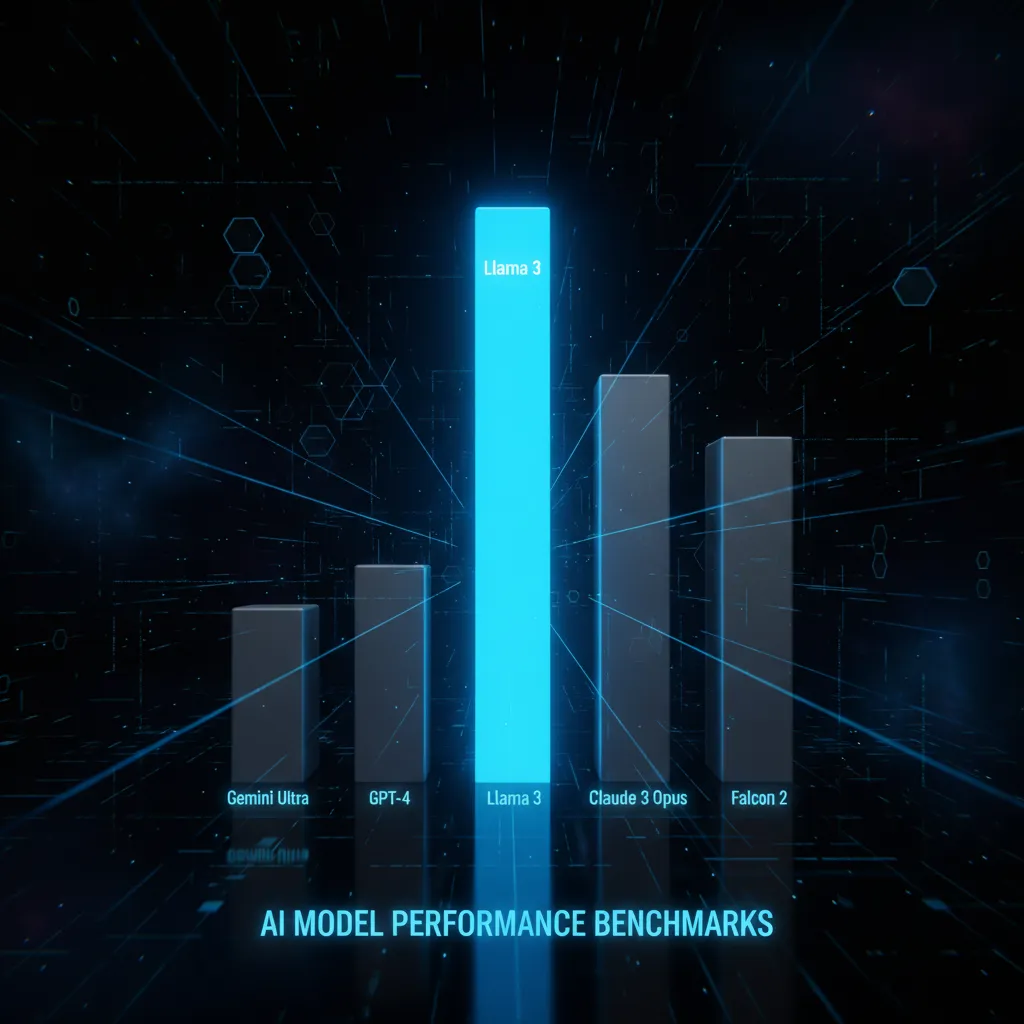

Llama 3 Benchmarks: How It Stacks Up Against the Competition

When evaluating any large language model, benchmarks are the ultimate test. The critical question for most users is: how does Llama 3 perform, especially in the context of Llama 3 vs GPT-4?

The initial Llama 3 benchmarks released by Meta demonstrated that the 70B parameter model is highly competitive, often outperforming proprietary models like GPT-3.5 and Claude 3 Haiku across standard industry evaluations. Furthermore, it firmly established itself as the leading force among all currently available open-source AI machine learning models.

A Closer Look at Performance Metrics

The table below summarizes Llama 3’s performance across critical domains like reasoning, coding, and common sense understanding, comparing the 8B and 70B variants against key rivals.

| Benchmark | Model | Llama 3 8B (Score) | Llama 3 70B (Score) | GPT-3.5 (Score) | Claude 3 Haiku (Score) |

|---|---|---|---|---|---|

| MMLU (Massive Multitask Language Understanding) | General Knowledge | 66.6 | 81.5 | 70.9 | 79.0 |

| GSM8K (Grade School Math) | Reasoning/Math | 81.3 | 94.0 | 92.5 | 92.1 |

| HumanEval (Code Generation) | Coding/Programming | 62.2 | 81.7 | 77.8 | 75.2 |

| DROP (Reading Comprehension) | Fact Retrieval | 74.9 | 85.1 | 80.9 | 79.5 |

| ARC-Challenge (Science Reasoning) | Advanced Reasoning | 79.6 | 93.3 | 87.8 | 91.5 |

(Scores are based on initial Meta and community evaluations; higher scores indicate better performance.)

As the data shows, the Llama 3 70B model is a formidable challenger. It excels particularly in coding (HumanEval) and complex reasoning (GSM8K, ARC-Challenge), areas where previous open models struggled.

Llama 3 vs GPT-4: The Open vs. Closed Debate

While Llama 3 70B can, in many instances, rival or exceed the performance of GPT-3.5, it is important to address the comparison with the current gold standard: GPT-4.

- GPT-4 Advantage: Proprietary models like GPT-4 often maintain an edge in highly complex, multi-faceted tasks, especially those requiring extremely deep contextual understanding, very large context windows (which are continually expanding), and advanced native multimodal capabilities (understanding images and audio seamlessly).

- Llama 3 Advantage: Llama 3 wins on accessibility, customization, and cost. Since you can download Llama 3 and run it locally or deploy it on your own infrastructure, developers gain complete control over data privacy and fine-tuning. This is critical for businesses operating in regulated environments. Furthermore, Llama 3 is arguably the best open source LLM, giving it a massive lead in the democratization of powerful AI.

The competition is no longer about one model beating all others; it’s about providing the best tool for the job. For developers who need maximum flexibility and transparency, Llama 3 is now the uncontested leader.

Understanding the Open-Source Philosophy of Meta AI

The decision by Meta to release Llama 3 as an open-source AI model is one of the most important developments in recent artificial intelligence news. It’s a strategic move with far-reaching implications for the entire tech industry.

Open source doesn’t just mean free; it means the full weights and architecture are available for inspection, modification, and deployment. This commitment accelerates AI development in several critical ways:

1. Democratizing Access to Power

Before Llama 3, only organizations with billions of dollars in funding could train truly powerful LLMs. Now, with the Llama weights available, smaller companies, academic researchers, and individual developers within the open source AI community can access a foundational model that performs near the state-of-the-art. This levels the playing field, making advanced natural language processing technology accessible to everyone.

2. Accelerating Safety and Research

By opening the model, Meta allows thousands of external researchers to scrutinize the code, identify biases, test for vulnerabilities, and propose safety improvements. This collective oversight model is vastly more effective than relying solely on an internal team. The transparency fostered by Llama 3’s open nature contributes significantly to responsible AI development.

3. Fostering Innovation Through Fine-Tuning

The ability to easily and legally perform fine-tuning Llama 3 on proprietary data sets is a game-changer for enterprises. A healthcare provider might fine-tune Llama 3 on medical journals, or a legal firm might fine-tune it on case law. This specialization allows companies to create highly optimized, domain-specific machine learning models that far exceed generic capabilities.

[Related: The Rise of Edge AI: Unleashing Intelligence at the Device Frontier]

The Impact on the Open Source AI Community

The release of Llama 3 has energized the open source AI community. Frameworks like Hugging Face immediately integrated the models, leading to rapid iteration, quantization (making models smaller and faster), and new tool development. This collaborative momentum is one of the most exciting AI trends 2024 and beyond.

Llama 3 in Action: Features and Use Cases

The power of Llama 3 translates into tangible, real-world utility across several applications. Beyond general knowledge and chat, its specific Llama 3 features make it a strong tool for both consumer and enterprise needs.

Powering the Meta AI Assistant

Perhaps the most visible use of Llama 3 is as the engine behind the Meta AI assistant integrated across Meta’s platforms: Facebook, Instagram, WhatsApp, and Ray-Ban Meta Smart Glasses. This integration transforms these apps into powerful information and creation tools. The assistant leverages Llama 3’s advanced conversational AI capabilities to:

- Real-time information retrieval: Answer complex queries based on current web data.

- Creative generation: Instantly generate text, code, or even images (using its companion image model).

- Contextual tasks: Summarize long chats or draft emails directly within the messaging interface.

Advanced Reasoning and Coding

Llama 3’s deep training on code and logic datasets means it excels where Llama 2 sometimes faltered: complex reasoning and generating robust, functional code.

- Code Generation: Developers find Llama 3 highly effective for generating boilerplate code, debugging existing code, and translating between programming languages.

- Logical Problem Solving: The model shows improved ability to tackle multi-step math problems and complex logical puzzles, making it valuable for research and data analysis tasks.

Llama 3 API and Enterprise Deployment

For enterprises, the existence of the Llama 3 API (offered through various cloud providers) combined with the ability to download Llama 3 and self-host offers unprecedented flexibility.

- Cost Efficiency: Running Llama 3 on your own infrastructure can be significantly cheaper than relying on metered usage of proprietary APIs, especially at high scale.

- Security and Compliance: Deploying the model behind a company firewall ensures that sensitive data never leaves the organization’s control, satisfying strict regulatory requirements like HIPAA or GDPR.

Practical Guide: How to Use and Download Llama 3

One of the most exciting aspects of Llama 3 is the ease of access for developers and enthusiasts. You have several options for accessing and running Llama 3 locally, depending on your technical comfort and hardware capabilities.

Method 1: Instant Access via Meta AI Assistant

For general users interested in interacting with the Meta Llama 3 model, the easiest way is through the Meta AI assistant within WhatsApp, Instagram, or Facebook. This offers a seamless, free-to-use interface for chat, creativity, and searching.

Method 2: Cloud Access (Llama 3 API)

Most major cloud providers (AWS, Google Cloud, Microsoft Azure) quickly integrated Llama 3 into their platforms. This is the ideal route for businesses needing scalability, high availability, and easy integration with existing cloud services.

- Advantages: No need to manage hardware; guaranteed uptime; pay-as-you-go scaling.

- Implementation: Access the model via the vendor’s machine learning service console and call the Llama 3 API endpoint.

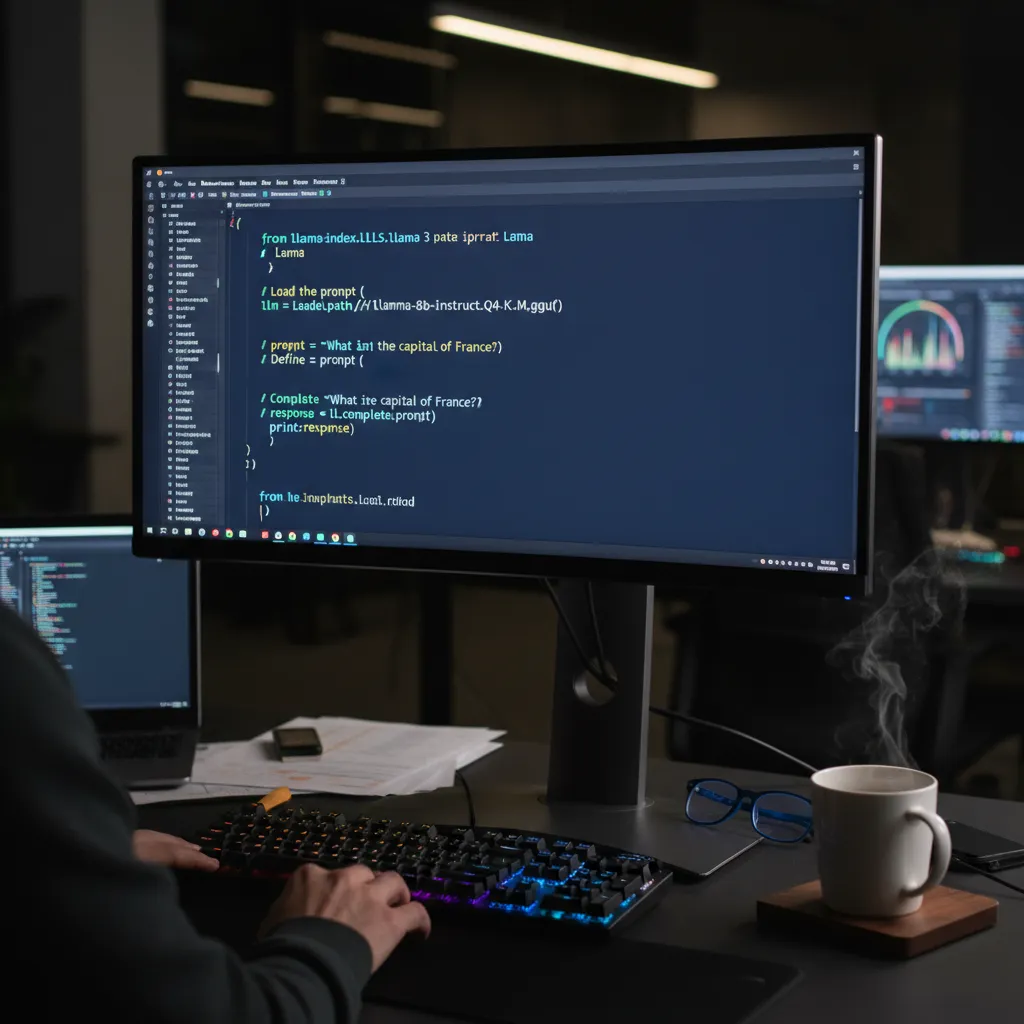

Method 3: Running Llama 3 Locally (The Developer’s Choice)

For true customization, privacy, and full control, developers will want to download Llama 3 weights and run them locally. This requires suitable hardware (a dedicated GPU, especially for the 70B model) and specialized tooling.

Step-by-Step for Local Deployment (Using Ollama)

Ollama is a popular, user-friendly tool that simplifies the process of running Llama 3 locally on macOS, Linux, or Windows (via WSL).

- Install Ollama: Download and install the Ollama client for your operating system.

- Pull the Model: Open your terminal and use a simple command to pull the desired version of Llama 3. The Llama 3 8B model is manageable on many consumer-grade GPUs (8GB VRAM or more), while the 70B model requires significant VRAM (often 64GB or more).

ollama run llama3:8b

```

3. **Interact:** Once the model is downloaded, the terminal becomes your chat interface. You can immediately begin using the model for code generation, text completion, or complex queries.

#### The Llama 3 Tutorial: Programmatic Access

For developers looking to integrate Llama 3 into custom applications, the official Python libraries or specialized frameworks offer robust access. This is essential for those who need to perform **fine-tuning Llama 3** for specific tasks.

```python

# Example of using Llama 3 via a basic API client (e.g., using Hugging Face transformers or a self-hosted endpoint)

import requests

def query_llama3(prompt):

# This URL would point to your local or cloud-hosted Llama 3 API endpoint

API_URL = "http://localhost:11434/api/generate"

payload = {

"model": "llama3:8b",

"prompt": prompt,

"stream": False

}

response = requests.post(API_URL, json=payload)

if response.status_code == 200:

return response.json().get('response')

else:

return f"Error: {response.status_code}"

# Example Use

code_prompt = "Write a Python function to check if a number is prime."

response = query_llama3(code_prompt)

print(response)

This programmatic access demonstrates the versatility of the Llama 3 API and provides a clear pathway for utilizing the model in professional AI development workflows.

[Related: Best New AI Tools for Productivity and Creativity]

The Road Ahead: The Future of Meta’s AI Models

The release of Llama 3 is not an endpoint but a milestone in Meta’s aggressive pursuit of next generation AI. Looking ahead, the roadmap suggests even more powerful, versatile models are coming.

The Larger Models

While the 8B and 70B models marked the initial Llama 3 release, Meta has confirmed plans for a larger model—potentially exceeding 400 billion parameters. This mega-model would be trained on even larger datasets and aim for parity or superiority over the absolute top-tier proprietary models.

Multimodality and Context Windows

Future iterations of Llama 3 are expected to fully embrace multimodality, meaning the models will be natively capable of understanding and generating content across text, images, audio, and video, moving far beyond traditional natural language processing. Additionally, there is a focus on extending the context window, allowing the models to maintain highly detailed conversations and process massive documents, a critical capability for specialized applications.

The sustained momentum behind Llama, coupled with Meta’s commitment to the open source AI community, means that the pace of innovation in this sector is unlikely to slow down. This continuous cycle of improvement solidifies Llama 3’s position as a core piece of artificial intelligence news and a driving force behind future AI trends 2024.

Conclusion: Llama 3 Defines the Open AI Landscape

Llama 3 represents a pivotal moment for the industry. By delivering a performance profile that challenges proprietary giants like GPT-4, and doing so with open weights, Meta has given an unparalleled gift to the global community of researchers and developers.

The two main variants, Llama 3 8B and Llama 3 70B, offer scalable solutions for everything from localized applications (running Llama 3 locally) to large-scale, enterprise-grade cloud deployments. Its success in key Llama 3 benchmarks demonstrates that the era of closed AI models having a massive, uncontested lead is rapidly drawing to a close.

Whether you are utilizing the advanced conversational AI within the Meta AI assistant, performing complex fine-tuning Llama 3 for a niche industry, or simply exploring the future of machine learning models, Llama 3 is the foundational technology that will drive the next wave of innovation. The message is clear: the open-source community now has the most powerful tool available to shape the future of AI development.

Start experimenting today. The opportunity to contribute to and benefit from the best open source LLM is waiting.

FAQs (People Also Ask)

Q1. What is Llama 3, and who created it?

Llama 3 is a large language model (LLM) created and released by Meta AI (Meta Platforms, Inc.). It is the successor to Llama 2 and represents a significant advance in performance, particularly in reasoning and code generation. It is primarily an open-source AI model, meaning its weights and architecture are publicly available for developers to download Llama 3 and use.

Q2. Is Llama 3 better than GPT-4?

When comparing Llama 3 vs GPT-4, the answer depends on the specific use case. The powerful Llama 3 70B model significantly outperforms models like GPT-3.5 and leads all current open-source models in independent Llama 3 benchmarks. However, proprietary models like GPT-4 (especially the latest versions) may still hold an advantage in extremely complex reasoning tasks, advanced multimodality, and very long context processing. Llama 3’s major advantage is its open-source nature, offering greater flexibility and privacy.

Q3. How can I access and use Llama 3?

There are three primary ways to access Llama 3 features:

- Meta AI Assistant: Interact with it directly through Meta’s consumer apps (WhatsApp, Instagram, Facebook).

- Cloud APIs: Access the Llama 3 API through major cloud platforms like AWS, Google Cloud, and Azure.

- Local Deployment: Download Llama 3 weights and run them on your local hardware using tools like Ollama or the official Hugging Face transformers library for full control and fine-tuning Llama 3.

Q4. What are the two main versions of Llama 3?

The initial Llama 3 release included two main pretrained and instruction-fine-tuned versions:

- Llama 3 8B: An 8-billion parameter model, designed for quick inference, resource-constrained environments, and efficient running Llama 3 locally on consumer devices.

- Llama 3 70B: A 70-billion parameter model, offering near-state-of-the-art performance for complex tasks requiring high reasoning and output quality.

Q5. What is the main difference between Llama 3 and Llama 2?

The primary differences are training scale and performance. Llama 3 was trained on a dataset seven times larger (15 trillion tokens) than Llama 2, leading to vastly improved reasoning, instruction following, and accuracy across standardized benchmarks (like MMLU and HumanEval). Additionally, Llama 3 uses a larger 128K token vocabulary for more efficient processing, marking a clear generational leap in AI development.

Q6. Can Llama 3 generate code?

Yes, Llama 3 is highly capable of generating code. Due to its extensive training on code-specific datasets, the Llama 3 70B model ranks exceptionally high on coding benchmarks like HumanEval, often outperforming many closed source models in this domain. It is an excellent tool for code completion, debugging, and generating scripts across various programming languages.

Q7. What does ‘open weights’ mean for Llama 3?

The term ‘open weights’ means that Meta has publicly released the numerical values (the parameters or weights) that the AI model learned during its training process. This allows anyone to download Llama 3, inspect how it functions, deploy it anywhere they choose (including privately on their own servers), and legally modify or fine-tuning Llama 3 for specific tasks, thereby supporting the open source AI community.