Claude 3.5 Sonnet vs GPT-4o: The New AI King?

Introduction: A Clash of AI Titans

The landscape of large language models (LLMs) is less of a steady evolution and more of a non-stop revolution. After its May 2024 launch, OpenAI’s GPT-4o, dubbed the “Omni” model, dominated the headlines with its multimodal speed and seamless integration. It was widely considered the best AI model of 2024 upon release, setting a new bar for responsiveness and general intelligence.

Then, Anthropic dropped a bombshell: Claude 3.5 Sonnet.

Anthropic, often seen as the primary challenger to OpenAI, launched the newest iteration of its mid-tier model with performance figures that not only matched but, in several critical areas, decisively surpassed GPT-4o. This isn’t just a minor update; it’s a fundamental shift in the AI hierarchy, immediately reigniting the perennial question: Who holds the crown?

This analysis dives into the metrics, features, and real-world utility of both contenders. We compare Claude 3.5 Sonnet and GPT-4o across complex reasoning, coding proficiency, visual processing, and, critically, cost-effectiveness. Our goal is to provide a grounded, actionable answer to whether Claude 3.5 Sonnet truly represents a “GPT-4o killer,” and to help you choose the right AI assistant for your specific needs, whether you are a developer, a content creator, or a casual user.

The New AI Paradigm: Sonnet 3.5’s Surprise Attack

Anthropic’s strategy with the 3.5 family has been notably aggressive. Unlike the previous Claude 3 generation (Haiku, Sonnet, Opus), where Opus was the flagship, Sonnet 3.5 has been engineered to occupy a premium sweet spot: flagship performance at a mid-tier price point.

The immediate reaction across the tech world was astonishment. Benchmarks like MMLU (Massive Multitask Language Understanding) and HumanEval (Code generation) saw Claude 3.5 Sonnet vaulting past both GPT-4 and GPT-4o, challenging the established dominance of the OpenAI architecture.

Speed and Efficiency: The Claude Advantage

One of the most noticeable differences when comparing the speed of Claude 3.5 Sonnet and GPT-4o is the sheer responsiveness of Anthropic’s model. Sonnet 3.5 is engineered for speed, offering rapid outputs that feel near-instantaneous, especially when dealing with complex, multi-step prompts.

This speed boost isn’t merely a convenience; it drastically changes the workflow for professionals. For developers requiring rapid iterations or marketers needing quick content drafts, the marginal gain in tokens-per-second translates into substantial productivity increases over a workday.

Furthermore, Anthropic has consistently emphasized safety and controlled responses. While both companies are committed to responsible AI development, Anthropic’s roots in Constitutional AI often result in outputs that are carefully aligned and less prone to harmful or biased content, a key factor for large enterprises and for publishers who must meet advertising content policies such as AdSense’s.

Understanding Anthropic’s Approach: Intelligence as a Service

Anthropic positions its models not just as predictive text generators but as intelligent systems capable of deep understanding and complex instruction following. The release of the 3.5 family, starting with Sonnet, underscores a focus on refining the model’s inner reasoning capabilities rather than simply scaling parameters.

This refinement is evidenced in tasks requiring nuanced understanding, such as summarizing intricate legal documents, dissecting scientific papers, or understanding subtle changes in tone within a creative writing prompt. For users interested in sophisticated data analysis or research assistance, understanding this difference in underlying philosophy is paramount when comparing the two next-generation models.

Head-to-Head Benchmarks: Intelligence and Reasoning

One widely used measure of an LLM is its performance on standardized academic and practical benchmarks. These scores do not settle the question, “is Claude 3.5 Sonnet better than GPT-4o?” on their own, but they are the most comparable starting point.

MMLU and General Knowledge Scores

The MMLU benchmark tests a model’s knowledge across 57 subjects, including humanities, STEM, and social sciences. Post-launch data shows a clear pattern:

| Benchmark Category | Claude 3.5 Sonnet Score | GPT-4o Score | Analysis |
|---|---|---|---|
| MMLU (General Intelligence) | ~88.7% | ~87.4% | Sonnet takes a slight lead, showing superior general knowledge and understanding. |
| GSM8K (Math Problem Solving) | ~92.0% | ~90.8% | Sonnet excels at complex arithmetic and logical step-by-step reasoning. |
| DROP (Reading Comprehension) | ~85.0% | ~83.7% | Highlights Sonnet’s strength in extracting information from dense texts. |
| HumanEval (Coding) | ~84.9% | ~82.3% | A significant advantage for developers (covered in the next section). |

Source: Anthropic internal data and independent lab testing post-June 2024 releases.

The message is clear: for foundational intelligence, reasoning, and accuracy, Claude 3.5 Sonnet benchmarks position it slightly ahead of GPT-4o. This is particularly relevant for high-stakes tasks where accuracy is non-negotiable, such as academic research or medical summarization.
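To put “slightly ahead” in concrete terms, the short sketch below computes the gap in percentage points for each row of the table above. The scores are the approximate figures from that table; the names `SCORES` and `point_gaps` are ours, chosen only for illustration.

```python
# Approximate scores copied from the table above, as (Sonnet, GPT-4o) in percent.
SCORES = {
    "MMLU": (88.7, 87.4),
    "GSM8K": (92.0, 90.8),
    "DROP": (85.0, 83.7),
    "HumanEval": (84.9, 82.3),
}


def point_gaps(scores):
    """Return the Sonnet-minus-GPT-4o gap per benchmark, in percentage points."""
    return {
        name: round(sonnet - gpt4o, 1)
        for name, (sonnet, gpt4o) in scores.items()
    }


gaps = point_gaps(SCORES)
for name, gap in gaps.items():
    print(f"{name}: Sonnet leads by {gap} points")
```

On these figures, every lead is under three points, which is why we describe the advantage as slight rather than decisive.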

Complex Problem Solving (AI Reasoning Capabilities)

Beyond rote knowledge, the real differentiator for large language model (LLM) benchmarks is how they handle novel problems—tasks requiring multi-step thought, planning, and self-correction.

Anthropic claims Sonnet 3.5 has been specifically optimized for complex, multi-turn reasoning chains. We’ve seen this play out in scenarios where the AI must:

- Analyze a large dataset or document.

- Synthesize a hypothesis.

- Critique its own output based on new constraints.

- Present a final, coherent strategy.
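The four steps above can be sketched as a simple prompt chain, where each step’s output becomes the next step’s input. This is a minimal illustration under our own assumptions, not how either vendor implements reasoning: `run_chain`, `STEPS`, and `echo_model` are hypothetical names, and `echo_model` is a stand-in that you would replace with a real API call.

```python
from typing import Callable, List

# One prompt template per step in the list above.
STEPS = [
    "Analyze the following material and list the key facts:\n{context}",
    "Based on this analysis, propose one hypothesis:\n{context}",
    "Critique the hypothesis under this new constraint: {constraint}\n{context}",
    "Write a final, coherent strategy that addresses the critique:\n{context}",
]


def run_chain(model: Callable[[str], str], document: str, constraint: str) -> List[str]:
    """Run each step in order, feeding the previous output into the next prompt."""
    outputs = []
    context = document
    for template in STEPS:
        prompt = template.format(context=context, constraint=constraint)
        context = model(prompt)  # the model's answer becomes the next context
        outputs.append(context)
    return outputs


def echo_model(prompt: str) -> str:
    """Stand-in for a real LLM call: returns only the first line of the prompt."""
    return prompt.splitlines()[0]


results = run_chain(echo_model, "Q3 customer feedback export", "budget is fixed")
```

Swapping `echo_model` for a function that calls either model lets you run the same chain against both and compare how well each keeps context across steps.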

In these deep reasoning tasks, Sonnet often maintains coherence and contextual awareness across longer conversations better than its predecessor, Claude 3 Opus, and often better than GPT-4o. While GPT-4o is excellent at rapid-fire, real-time responses, when the task demands deep cognitive load and structured output, reviewer consensus often favors Sonnet.

The Developer’s Dilemma: Coding and Tool Use

For developers, the choice between these two models often boils down to two things: generating correct, secure, and idiomatic code, and effectively using external tools (like code interpreters or specialized APIs).

HumanEval and Beyond: Which Model Writes Cleaner Code?

The HumanEval benchmark, which asks a model to complete Python functions from their signatures and docstrings and then checks the results against unit tests, is where the coding comparison between GPT-4o and Claude 3.5 Sonnet shows its most dramatic results.

Claude 3.5 Sonnet achieved a pass rate of roughly 84.9%, about 2.6 percentage points above GPT-4o’s roughly 82.3% in the table above. This suggests that Sonnet is adept at understanding programming concepts and producing working solutions, although HumanEval itself measures functional correctness rather than code style or security.
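To make the idea of a pass rate concrete, here is a toy, HumanEval-style check. It is a sketch under our own assumptions, not the official harness: the problem, the two candidate completions, and the names `PROBLEM_PROMPT`, `CANDIDATES`, and `passes_tests` are all invented for illustration.

```python
# The "prompt" a model would receive: a signature plus a docstring.
PROBLEM_PROMPT = '''
def is_palindrome(text: str) -> bool:
    """Return True if text reads the same forwards and backwards, ignoring case."""
'''

# Two hypothetical model completions (function bodies) for the prompt above.
CANDIDATES = [
    "    return text.lower() == text.lower()[::-1]\n",
    "    return text == text[::-1]\n",  # misses the "ignoring case" requirement
]


def passes_tests(completion: str) -> bool:
    """Build the function from prompt + completion and run hidden unit tests."""
    namespace = {}
    try:
        # NOTE: exec on untrusted model output is unsafe; real harnesses sandbox it.
        exec(PROBLEM_PROMPT + completion, namespace)
        fn = namespace["is_palindrome"]
        assert fn("Level") is True
        assert fn("abc") is False
        assert fn("") is True
        return True
    except Exception:
        return False


# Fraction of candidates that pass every test.
pass_rate = sum(passes_tests(c) for c in CANDIDATES) / len(CANDIDATES)
print(f"pass rate: {pass_rate:.0%}")
```

The real benchmark applies the same idea across many problems, so a gap of a few points corresponds to a handful of additional problems solved.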

“For refactoring complex legacy code or debugging intricate dependency issues, Claude 3.5 Sonnet provides suggestions that are not only functional but often cleaner and more idiomatic than competitors.” — Developer consensus

This coding capability makes Sonnet the frontrunner for developers looking to accelerate their workflow, generate unit tests, or perform quick technical documentation reviews.

API Access and Integration

Both models offer robust API access, but the user experience can vary. The Claude 3.5 API is designed to be highly accessible, often providing slightly better latency (response time) than competing models at similar tier levels.

For enterprise users building applications that rely on constant, high-volume calls, the combination of superior performance, competitive latency, and favorable bulk pricing (discussed later) makes Sonnet a compelling choice.
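For readers who have not called either API, the sketch below builds (but does not send) an equivalent request for each vendor, so the two shapes can be compared side by side. The endpoint paths, header names, and model identifiers reflect the public documentation as we understand it at the time of writing and should be verified before use; the helper names `anthropic_request` and `openai_request` and the placeholder keys are ours.

```python
import json


def anthropic_request(prompt: str, max_tokens: int = 512) -> dict:
    """Describe a Messages API call to Claude 3.5 Sonnet (not sent here)."""
    return {
        "url": "https://api.anthropic.com/v1/messages",
        "headers": {
            "x-api-key": "<ANTHROPIC_API_KEY>",  # placeholder, not a real key
            "anthropic-version": "2023-06-01",
            "content-type": "application/json",
        },
        "body": {
            "model": "claude-3-5-sonnet-20240620",
            "max_tokens": max_tokens,
            "messages": [{"role": "user", "content": prompt}],
        },
    }


def openai_request(prompt: str) -> dict:
    """Describe a Chat Completions call to GPT-4o (not sent here)."""
    return {
        "url": "https://api.openai.com/v1/chat/completions",
        "headers": {
            "Authorization": "Bearer <OPENAI_API_KEY>",  # placeholder
            "Content-Type": "application/json",
        },
        "body": {
            "model": "gpt-4o",
            "messages": [{"role": "user", "content": prompt}],
        },
    }


claude_req = anthropic_request("Summarize this changelog in three bullets.")
gpt_req = openai_request("Summarize this changelog in three bullets.")
print(json.dumps(claude_req["body"], indent=2))
```

Because both bodies share the same `messages` structure, a thin wrapper like this makes it straightforward to run one prompt against both models and measure latency yourself.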

Key Feature for Developers: Improved Tool Use

A crucial, yet often overlooked, aspect of the Sonnet 3.5 release is its enhanced tool use and function calling capabilities. This means the model is better at determining when and how to interact with external tools (like search engines, databases, or proprietary company tools) to fulfill a complex request. This is vital for RAG (Retrieval-Augmented Generation) applications and sophisticated agents.
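In practice, tool use means the model returns a structured request naming a tool and its arguments, and your application runs it. The sketch below shows only the application side, in a vendor-neutral form: the tool `get_order_status`, its fake data, and the JSON shape of `model_output` are assumptions for illustration, not either vendor’s exact wire format.

```python
import json
from typing import Any, Callable, Dict


def get_order_status(order_id: str) -> str:
    """Hypothetical company tool; a real one would query a database."""
    fake_db = {"A100": "shipped", "A101": "processing"}
    return fake_db.get(order_id, "unknown")


# Registry of callable tools the application is willing to run.
TOOLS: Dict[str, Callable[..., Any]] = {"get_order_status": get_order_status}

# JSON-schema style description the model would see (shape is illustrative).
TOOL_SPECS = [
    {
        "name": "get_order_status",
        "description": "Look up the shipping status of an order by its ID.",
        "parameters": {
            "type": "object",
            "properties": {"order_id": {"type": "string"}},
            "required": ["order_id"],
        },
    }
]


def dispatch(tool_call_json: str) -> str:
    """Run the tool the model asked for and return its result as text."""
    call = json.loads(tool_call_json)
    fn = TOOLS.get(call["name"])
    if fn is None:
        return f"error: unknown tool {call['name']!r}"
    return str(fn(**call["arguments"]))


# Pretend the model decided to call the tool with these arguments.
model_output = '{"name": "get_order_status", "arguments": {"order_id": "A100"}}'
result = dispatch(model_output)
print(result)
```

A model with stronger tool use picks the right entry from `TOOL_SPECS` and fills in valid arguments more reliably, which is what keeps a loop like this from failing in agent and RAG applications.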

Multimodal Mastery: Vision and Creative Capabilities

The era of text-only LLMs is rapidly fading. Both GPT-4o and Claude 3.5 Sonnet are powerful multimodal models, capable of taking both text and images as input.

Analyzing Images: Detail and Contextual Understanding

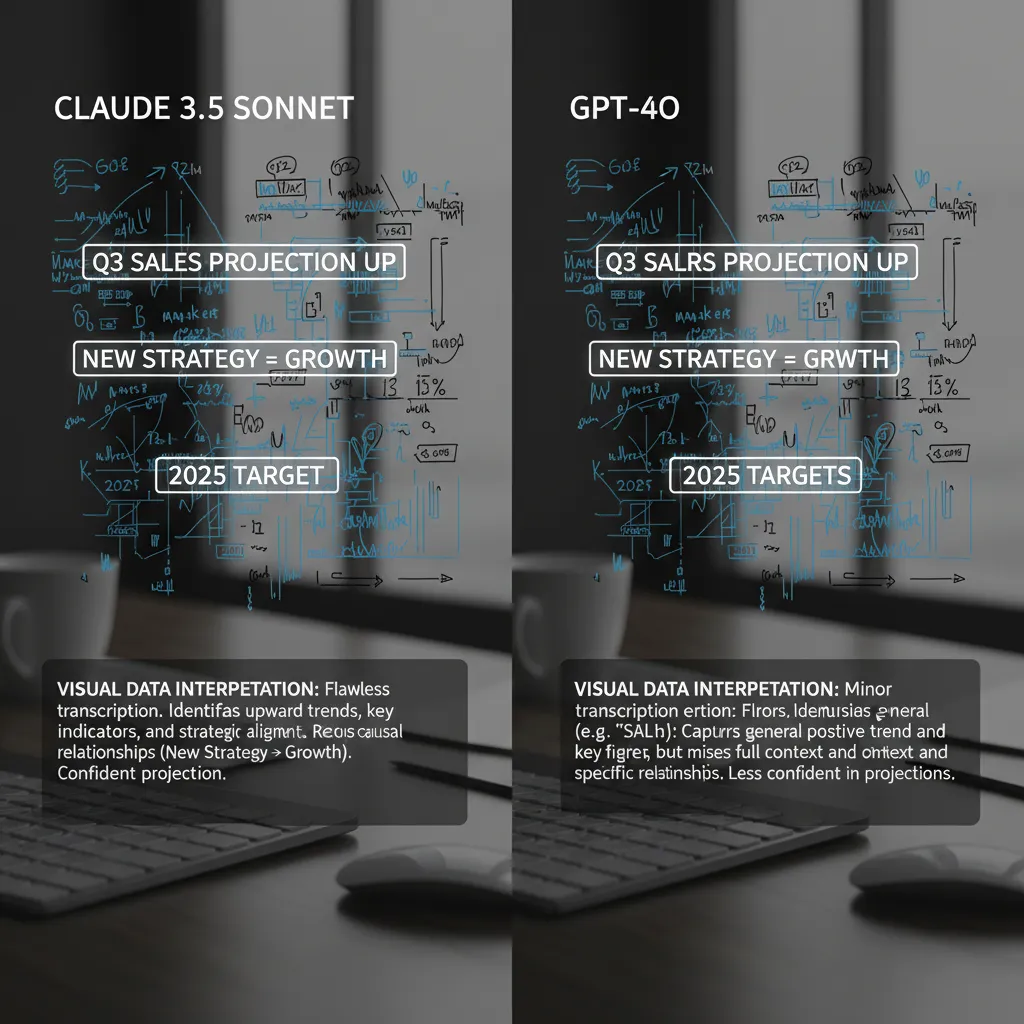

GPT-4o was initially praised for its incredibly fast and insightful vision processing, often identifying objects, text, and context in images with remarkable speed. However, Sonnet 3.5 has elevated the game, particularly in areas requiring fine-grained detail analysis.

Where Claude 3.5 Sonnet truly shines is in its ability to:

- Read and interpret complex charts and graphs: It demonstrates a deeper understanding of trends and data relationships within visualizations.

- Extract text from distorted or low-resolution images: This is crucial for digitizing forms, receipts, or historical documents.

- Analyze architectural or technical blueprints: Identifying specific components and their spatial relationships with high accuracy.

Content Creation and Style Consistency

For writers, marketers, and digital agencies, the quality of generated prose is paramount. Both models are exceptional, but they possess subtle stylistic differences:

- GPT-4o: Tends to be more concise and versatile, adapting easily to different tones, from formal reports to casual social media posts. Its speed makes it perfect for high-volume, rapid drafting.

- Claude 3.5 Sonnet: Often delivers responses characterized by superior depth, nuance, and structural elegance. It is particularly effective for long-form content, narrative writing, and maintaining a consistent, sophisticated brand voice. For content creation, those prioritizing quality, authority, and minimal post-generation editing often lean toward Sonnet.

A Feature Showdown: Artifacts vs. Omni’s Seamlessness

Beyond raw performance, new features define the user experience and expand the utility of these models. Anthropic and OpenAI have focused on divergent, yet equally impactful, user interface enhancements.

Claude 3.5 Artifacts Feature Explained

The Artifacts feature is arguably the most innovative part of the new release. Artifacts essentially turn the chat interface into a live, collaborative workspace.

When Claude generates code, documents, or design elements, these outputs are not just streamed into the chat history; they appear in a dedicated, dynamic window called the “Artifacts” space. This allows users to:

- See a live preview: View a functional website component, a chart, or a document layout in real-time.

- Iterate visually: Request changes to the code or design, and see the Artifact update instantly without having to copy and paste code elsewhere.

- Maintain context: The output remains active and editable separate from the conversational thread.

This feature is transformational for design, development, and data visualization tasks, making the experience less like prompting an AI and more like collaborating with a dedicated digital partner.

GPT-4o’s Focus on Real-Time Interaction

GPT-4o, by contrast, shines in its integration and real-time capabilities. The “Omni” experience is centered around seamless, low-latency interaction, particularly in voice and video modes (though these are often limited to specific applications like ChatGPT desktop).

GPT-4o is built to be a true conversational assistant, handling interruptions, emotional tone, and rapid modality switching with grace. While the Artifacts feature is a major step for persistent work, GPT-4o excels when the environment is fast-paced, verbal, or requires immediate, context-aware responses, solidifying its position as a powerful tool for customer service, real-time translation, and immediate productivity boosts.

Cost-Effectiveness and Pricing Comparison

In the professional realm, the “best” model is often the one that offers the highest performance-to-cost ratio. When comparing the two, we must look at the API pricing for bulk usage, as this is where enterprises and power users incur the majority of their expenses.

The Price Tag: Claude 3.5 Sonnet Pricing vs. GPT-4o

Anthropic positioned Sonnet 3.5 aggressively to capture market share, making it significantly more affordable than the previous flagship, Claude 3 Opus, while matching or exceeding its performance.

| Model | Input Token Price (per 1M tokens) | Output Token Price (per 1M tokens) | Performance Tier |
|---|---|---|---|
| Claude 3.5 Sonnet | ~$3.00 | ~$15.00 | Premium/Flagship |
| GPT-4o | ~$5.00 | ~$15.00 | Premium/Flagship |
| GPT-4 Turbo (Legacy) | ~$10.00 | ~$30.00 | High-End |

Note: Pricing is illustrative and subject to change; based on public API documentation at the time of writing.

The pricing structure clearly shows that for input processing—the bulk of the cost in many applications—Claude 3.5 Sonnet offers a better value proposition. It’s approximately 40% cheaper on input tokens than GPT-4o, while delivering slightly higher benchmark performance.
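The arithmetic behind the 40% figure, and what it means for a full bill, can be checked with a few lines of code. This sketch uses the illustrative prices from the table above; the workload of 10M input and 1M output tokens is a made-up example, and the names `PRICES`, `cost_usd`, and `input_savings_pct` are ours.

```python
# Illustrative prices from the table above: (input, output) in USD per 1M tokens.
PRICES = {
    "claude-3.5-sonnet": (3.00, 15.00),
    "gpt-4o": (5.00, 15.00),
    "gpt-4-turbo": (10.00, 30.00),
}


def cost_usd(model: str, input_tokens: int, output_tokens: int) -> float:
    """Total API cost in USD for a given token volume."""
    in_price, out_price = PRICES[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


def input_savings_pct(cheaper: str, pricier: str) -> float:
    """Percentage saved on input tokens by choosing the cheaper model."""
    return round(100 * (1 - PRICES[cheaper][0] / PRICES[pricier][0]), 1)


# Hypothetical input-heavy workload: 10M input tokens, 1M output tokens.
sonnet_cost = cost_usd("claude-3.5-sonnet", 10_000_000, 1_000_000)
gpt4o_cost = cost_usd("gpt-4o", 10_000_000, 1_000_000)
total_savings_pct = round(100 * (1 - sonnet_cost / gpt4o_cost), 1)

print(input_savings_pct("claude-3.5-sonnet", "gpt-4o"), sonnet_cost, gpt4o_cost)
```

Note that because output tokens cost the same on both models, the saving on a whole bill is smaller than 40% and shrinks as the share of output tokens grows.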

Value for Enterprise (AI Model Cost-Effectiveness)

The superior AI model cost-effectiveness of Claude 3.5 Sonnet makes a compelling case for large enterprises. For organizations that process massive amounts of data daily—from analyzing customer feedback to generating internal reports—the savings generated by the lower input token cost are enormous.

This advantage, coupled with the enhanced coding and complex reasoning capabilities, means businesses can achieve higher efficiency with a smaller expenditure. This is a critical factor for CIOs and development leads choosing the right AI assistant for scaled operations.

For individual users, both Claude 3.5 Sonnet and GPT-4o are available with a limited amount of free access (often with caps or slower speeds), while the paid subscriptions (e.g., Claude Pro or ChatGPT Plus) grant access to these top-tier models consistently. Even here, the perceived value of the newly empowered Sonnet is exceptionally high.

Choosing Your AI Assistant: Which Model is the Right Fit?

The title of “AI King” isn’t a single crown but rather a set of specialized crowns for different domains. Both models are groundbreaking, but their strengths lean towards different user types and workflows.

When to Choose Claude 3.5 Sonnet

Choose Claude 3.5 Sonnet if your primary concerns are:

- Accuracy and Deep Reasoning: You require the highest possible performance on academic benchmarks, complex logical tasks, or nuanced data analysis. Use it for research, scientific abstraction, or detailed summarization.

- Coding and Development: You need robust, clean code generation, high performance on the HumanEval benchmark, and advanced tool-use capabilities for building agents. The coding advantage makes it a strong contender for developers.

- Cost Efficiency at Scale: You run high-volume API applications where input token cost is a primary constraint. The Claude 3.5 Sonnet pricing is highly competitive.

- Live Workspace and Iteration: You heavily utilize the visual-output feedback loop offered by the Artifacts feature for design, document creation, or rapid prototyping.

When to Stick with GPT-4o

GPT-4o remains the superior choice in scenarios prioritizing:

- Real-Time Interaction: You rely heavily on seamless, low-latency conversational use, particularly via voice or video, where the model needs to understand tone and react instantaneously.

- Breadth of Ecosystem Integration: If your workflow is deeply integrated into the existing OpenAI ecosystem, custom GPTs, and widely adopted third-party tools, the inertia often favors GPT-4o.

- General Versatility: While Sonnet is technically more intelligent on certain metrics, GPT-4o is arguably the most broadly versatile model, handling text, vision, and audio tasks at a world-class level with exceptional speed.

The Future of AI Models: What’s Next for Anthropic and OpenAI?

The battle between Anthropic and OpenAI is fundamentally shaping the future of AI models. The release of Claude 3.5 Sonnet demonstrates a critical trend: the democratization of intelligence. Flagship performance is no longer reserved for the most expensive, largest models (like GPT-4 and Claude 3 Opus). Mid-tier models are rapidly closing the performance gap while becoming dramatically more efficient.

We anticipate several key developments as a result of this rivalry:

- Specialization: Future models will likely specialize further. We may see an “Opus” level model from Anthropic that targets a 10-15% advantage over Sonnet, and OpenAI is undoubtedly working on GPT-5 to reclaim the benchmark lead.

- Hybrid Architectures: More companies will explore mixing multimodal capabilities with specialized reasoning cores to optimize cost and performance.

- Focus on Artifacts: The success of the Artifacts feature will likely push competitors to incorporate persistent, interactive workspaces into their own platforms, moving AI from chat assistance to true collaborative work environments.

This intense competition benefits everyone, forcing rapid innovation and driving down the cost of highly intelligent systems. The focus is shifting from “Can the AI do it?” to “How quickly and cheaply can the AI do it?”

Conclusion: The New Standard for Performance

The arrival of Claude 3.5 Sonnet marks a clear inflection point in the LLM race. It is not just an incremental update; it is a disruptive force that has successfully claimed the benchmark crown in several pivotal categories, notably complex reasoning and coding.

GPT-4o remains an incredibly fast, versatile, and deeply integrated model, and it is a superior choice for real-time conversational tasks. For developers, researchers, and enterprises prioritizing raw intelligence, code quality, and cost-effectiveness, however, Claude 3.5 Sonnet presents a truly compelling case.

Is it the new AI king? In terms of current benchmarks for structured tasks, yes, it appears so. Anthropic has redefined what a “mid-tier” model can achieve, providing best-in-class performance at a price that undercuts the competition on input tokens. As both companies race toward their next major releases, the true winner is the consumer, who now has access to two highly capable, competitively priced options.

The choice is now less about which model is objectively “smarter” and more about which one fits seamlessly into your unique workflow and budget.

FAQs

Q1. What is Claude 3.5 Sonnet?

Claude 3.5 Sonnet is the newest generation mid-tier AI model developed by Anthropic. Launched in mid-2024, it delivers performance metrics that, according to independent benchmarks, surpass those of flagship models like GPT-4 and GPT-4o, particularly in complex reasoning, coding (HumanEval), and visual analysis. It is characterized by its speed, intelligence, and the introduction of the “Artifacts” feature.

Q2. How does Claude 3.5 Sonnet compare to GPT-4o in terms of speed?

Both models are exceptionally fast. GPT-4o is generally lauded for its ultra-low latency in real-time, multimodal conversational scenarios. However, Claude 3.5 Sonnet is highly optimized for rapid output generation for complex, structured text, often delivering entire drafts and code blocks quickly, which provides a significant advantage in productivity workflows.

Q3. Is free access to Claude 3.5 Sonnet available?

Yes, limited free access to Claude 3.5 Sonnet is available through the Claude website and mobile application. This free tier typically allows users a certain number of interactions per day. Power users who need higher usage limits or more advanced features will need to subscribe to the paid Claude Pro tier, while developers access the model through the API, which is billed by token usage.

Q4. Which model is better for coding: GPT-4o or Claude 3.5 Sonnet?

Independent testing and benchmarks like HumanEval suggest that Claude 3.5 Sonnet is currently the stronger model for coding. It demonstrates a higher pass rate than GPT-4o, and developers often report cleaner, more idiomatic code. Developers focused on code generation, debugging, and advanced script writing often prefer Sonnet.

Q5. What is the Claude 3.5 Artifacts feature?

The Artifacts feature is a new, interactive workspace integrated into the Claude interface. When Claude 3.5 Sonnet generates certain types of outputs (like code, charts, or documents), they appear in a persistent window called an “Artifact,” separate from the chat. This allows users to view, edit, and iterate on the output in a live environment, making the creative and development process more collaborative and visual.

Q6. How does Claude 3.5 Sonnet pricing affect its appeal?

The Claude 3.5 Sonnet pricing strategy is a major competitive advantage. Its API cost for input tokens is about 40% lower than GPT-4o’s (roughly $3 versus $5 per 1M tokens), while output tokens are priced the same, offering high performance at a mid-tier price point. This makes Sonnet highly attractive for large-scale enterprise use and high-volume application builders, especially for input-heavy workloads.

Q7. What are the main weaknesses of Claude 3.5 Sonnet?

While highly intelligent, Claude 3.5 Sonnet’s primary weakness is its relative lack of ecosystem ubiquity compared to OpenAI. GPT-4o benefits from deeper integration across a wider array of third-party apps, plugins, and custom GPTs. Additionally, while its reasoning is superior, its real-time voice and video multimodal capabilities are still developing and may not yet match GPT-4o’s seamless, low-latency interaction experience.